Octoparse simulates human web browsing behavior, such as opening a web page, logging into an account, entering text, and pointing at and clicking web elements. It provides a visual operation pane that is user-friendly and straightforward.

You can run your extraction project either on your own machine (Local Extraction) or in the cloud (Cloud Extraction). Because Octoparse simulates human interaction with web pages, features such as filling out forms and entering a search term into a text box make it much easier to extract web data. You can export the results in the format of your choice: CSV, Excel, HTML, TXT, or a database (MySQL, SQL Server, or Oracle).


Octoparse is an easy-to-use web scraping tool that collects data from the web. Being a Windows application, it works well for static and dynamic websites, including those whose pages use Ajax. It provides high-speed data collection, running up to 10 concurrent threads.

Crawlers run in Octoparse are determined by the extraction rules you configure. An extraction rule tells Octoparse which website to open, where the data you plan to crawl is located, and so on.
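To make the idea of an extraction rule more concrete, here is a minimal sketch of the kind of information such a rule carries. This is only an illustration: the `ExtractionRule` class, its field names, and the `describe` method are hypothetical and are not Octoparse's own rule format, which you build through the visual pane.

```python
from dataclasses import dataclass, field


@dataclass
class ExtractionRule:
    """Hypothetical stand-in for an extraction rule; not Octoparse's format."""

    start_url: str                               # which website to open
    fields: dict = field(default_factory=dict)   # field name -> where the data sits
    ajax_timeout_seconds: float = 0.0            # 0 means "do not wait for Ajax"

    def describe(self) -> str:
        """Return the rule as a readable list of steps, one per line."""
        lines = [f"open {self.start_url}"]
        if self.ajax_timeout_seconds > 0:
            lines.append(
                f"wait up to {self.ajax_timeout_seconds:g}s for Ajax content"
            )
        for name, location in self.fields.items():
            lines.append(f"extract {name} from {location}")
        return "\n".join(lines)


# Example with placeholder values (example.com and "h1" are assumptions).
rule = ExtractionRule("https://example.com", {"title": "h1"}, 5)
print(rule.describe())
```

The point of the sketch is only that a rule answers three questions: which page to open, how long to wait for it to load, and where each piece of data is.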


I suggest you guys check the websites you plan to crawl for any Terms of Service clauses related to scraping of their intellectual property.Īnother reason is that you don’t set Ajax time out.Octoparse is a free client-side Windows web scraping software that turns unstructured or semi-structured data from websites into structured data sets, no coding necessary. The reason why I took LinkedIn for example is to show that Octoparse is capable of grabbing different kinds of websites. You may risk your account being shut down or banned if you violate the agreement. In LinkedIn’s users agreement, there’s a term that shows you can’t scrape or copy profiles through any means including crawlers. One of the reasons is that you scrape the site at a disruptive or violated rate without regarding to the load you’re placing on its server. There are several reasons why you can’t get the result.

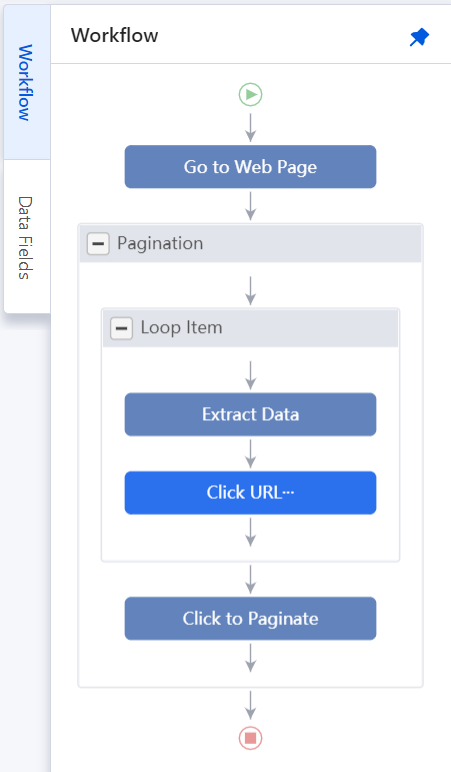

Many of you said you can’t get the result by following the previous LinkedIn tutorial. Now you can see the information I click on has been extracted. Once done configuring extraction rule, click “Next” and run the task on your computer by selecting “Local extraction”. You can choose a longer time than this)Ĭlick on information you want to grab. ➜Then “finish creating list”.➜ Click “Loop to process the list.”Ĭhoose “load page with Ajax”.( I choose 5 seconds. ➜ Then click “Save”.Ĭlick the search button, ➜ and select “Click an item”.Ĭlick the first profile image. ➜ Select “Create a list of items”.➜ “Add current item to the list”.➜“Continue to edit the list”.Ĭlick the second profile image.➜ “Add current item to the list”. If you signed in your LinkedIn account before, open the page in the built in browser directly.Ĭlick the search bar, ➜ and select “enter text value”. (Download my extraction task of this tutorial HERE just in case you need it.)
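The Ajax timeout in the steps above exists because Ajax content arrives after the page itself has loaded, so a scraper that reads the page too early finds nothing. A minimal sketch of the general idea follows. It is not how Octoparse works internally; `wait_until` and its parameters are hypothetical names used for illustration, and the 5-second default simply mirrors the value chosen in the tutorial.

```python
import time


def wait_until(condition, timeout_seconds=5.0, poll_interval=0.25):
    """Poll `condition` until it returns True or the timeout expires.

    Returns True if the condition became true in time, False otherwise.
    """
    deadline = time.monotonic() + timeout_seconds
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_interval)


# Simulated Ajax content that only becomes available on the third check.
checks = {"count": 0}


def content_loaded():
    checks["count"] += 1
    return checks["count"] >= 3


if wait_until(content_loaded, timeout_seconds=5.0, poll_interval=0.01):
    print("content is ready, safe to extract")
else:
    print("timed out, try a longer Ajax timeout")
```

If the timeout is too short for a slow page, the wait gives up before the data appears, which is why choosing a longer time can fix an empty result.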